The Evolution of Technology Governance in the Age of AI

Artificial intelligence has shifted from being an experiment in innovation labs to becoming a core part of how businesses operate. Especially in manufacturing and industrial settings, AI is now used for quality assurance, predicting maintenance needs, improving supply chains, boosting workforce efficiency, and automating tasks intelligently.

However, as AI becomes more widespread, concerns about accountability, ethics, transparency, cybersecurity, and business risk also grow. Standard IT governance approaches, which focus on system ownership, data control, and change management, are no longer sufficient on their own. With AI, organizations face new challenges such as probabilistic decisions, autonomous systems, continuous learning from data, and heightened regulatory and reputational risk.

Updating technology governance for AI isn't about imposing stricter controls; it's about modernizing oversight so innovation can flourish responsibly. Modernized oversight ensures that AI delivers real value while protecting trust, meeting compliance standards, and maintaining human supervision.

From IT Governance to AI-Enabled Digital Governance

Traditional IT governance frameworks like COBIT, ITIL, and ISO 27001 were created for deterministic systems, that is, applications that function predictably after deployment. However, AI systems operate differently:

- These systems can keep learning and adapting after deployment.

- Results may differ depending on contextual factors.

- The performance of models is significantly influenced by the quality of input data.

- Unexpected bias and model drift can arise without warning.

- Responsibility for outcomes may extend across several organizational functions.

This shift requires governance models that:

- Focus on adaptability instead of remaining static

- Emphasize cross-functional collaboration over IT-centric approaches

- Prioritize data-driven methods rather than relying on systems alone

- Connect value to outcomes instead of merely linking it to projects

Organizations that treat AI as a conventional IT resource may underestimate the associated risks and fall short of their performance objectives. Adopting robust governance frameworks early better positions enterprises to scale AI initiatives with confidence.

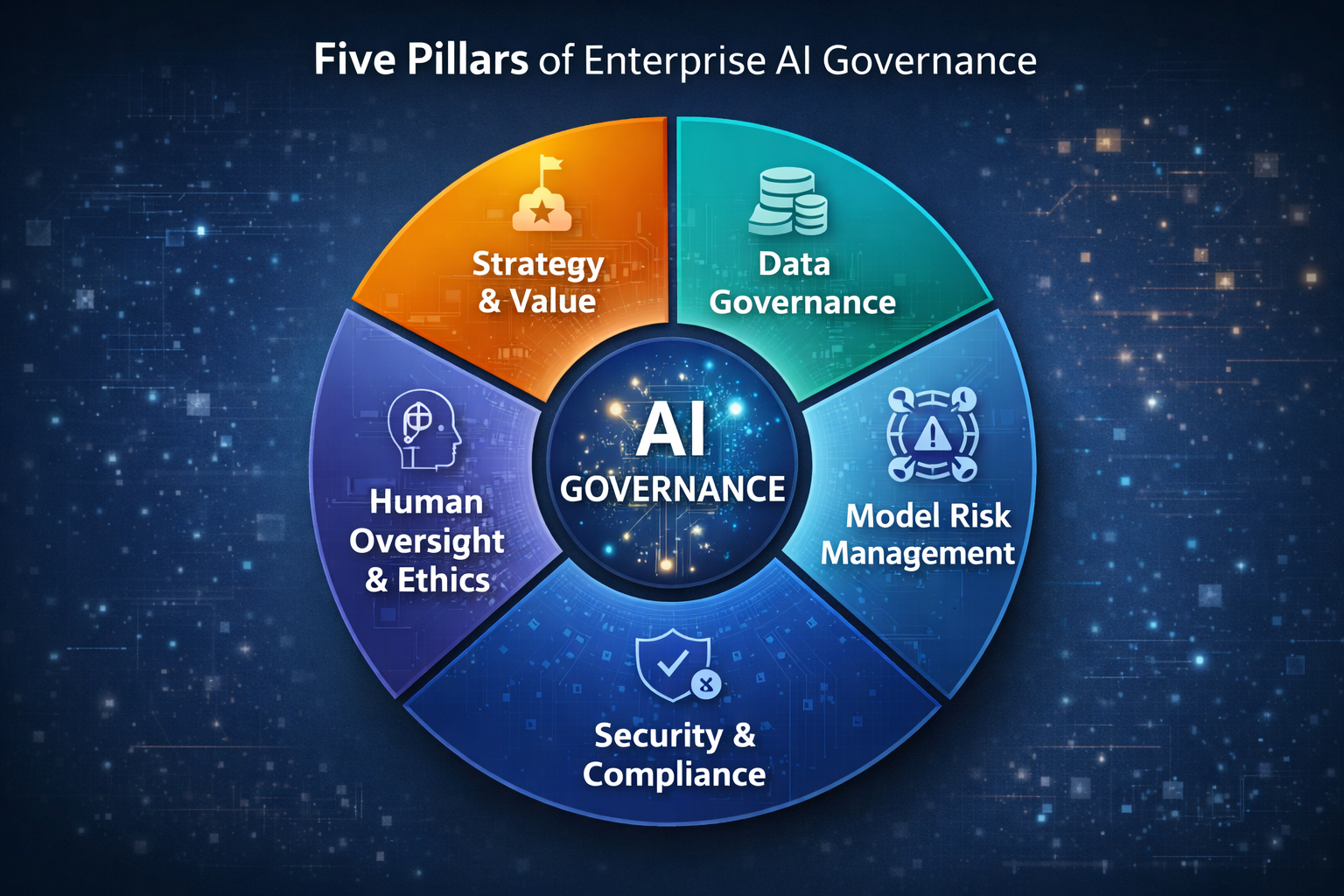

Key Dimensions of AI Governance in the Enterprise

Modern governance in the AI era spans five critical dimensions.

1. Strategy & Value Alignment

AI initiatives must be governed with the same rigor as capital investment programs. Leading enterprises now require:

a) Clear business value cases before model development

b) Alignment to productivity, safety, quality, or customer outcomes

c) Defined ownership for benefits realization

In manufacturing, this often includes:

a) OEE improvement targets for predictive maintenance

b) Scrap reduction thresholds for vision-based quality systems

c) Lead-time or fill-rate KPIs for supply chain algorithms

Governance shifts from "Did we deploy the model?" to "Is the model delivering business value reliably?"
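As a rough illustration of governing against a value target rather than a deployment milestone, the sketch below computes OEE using the commonly cited definition (availability × performance × quality) and checks whether a predictive maintenance rollout met an agreed uplift. The `ShiftRecord` fields, the `meets_value_target` helper, and the sample figures are hypothetical, not taken from any specific plant or product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShiftRecord:
    """Hypothetical production summary for one shift."""
    planned_minutes: float       # scheduled production time
    run_minutes: float           # time the line actually ran
    ideal_cycle_minutes: float   # ideal time to produce one unit
    total_units: int
    good_units: int


def oee(r: ShiftRecord) -> float:
    """OEE as availability x performance x quality."""
    if r.planned_minutes <= 0 or r.run_minutes <= 0 or r.total_units <= 0:
        raise ValueError("planned time, run time, and unit count must be positive")
    availability = r.run_minutes / r.planned_minutes
    performance = (r.ideal_cycle_minutes * r.total_units) / r.run_minutes
    quality = r.good_units / r.total_units
    return availability * performance * quality


def meets_value_target(baseline: ShiftRecord, current: ShiftRecord,
                       min_uplift: float) -> bool:
    """True if OEE improved by at least min_uplift (absolute points, e.g. 0.05)."""
    return oee(current) - oee(baseline) >= min_uplift


# Illustrative figures only.
before = ShiftRecord(480, 400, 1.0, 360, 342)
after = ShiftRecord(480, 440, 1.0, 400, 388)
print(round(oee(before), 4), round(oee(after), 4))
print(meets_value_target(before, after, min_uplift=0.05))
```

A check like this lets a benefits owner answer the question "is the model delivering value?" with the same KPI that justified the business case.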

2. Data Governance as the Foundation

AI performance is inseparable from data integrity.

Modern AI governance extends traditional data controls across:

a) Data lineage and traceability

b) Consent, retention, and usage controls

c) Training vs. operational data segregation

d) Protection of intellectual property and industrial designs

A growing number of businesses now maintain Model Data Bills of Materials (DBoMs), which record the datasets, sources, and transformations used for each model. This practice is essential for audits, transparency, and meeting compliance requirements.
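One possible shape for such a record is sketched below. There is no single standard schema assumed here: the `DatasetEntry` and `DataBillOfMaterials` classes, their fields, and the sample dataset names are illustrative. The fingerprint is simply a SHA-256 hash of the serialized record, so an auditor can detect whether the documented inputs changed between model versions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DatasetEntry:
    """One dataset that fed a model (hypothetical fields)."""
    name: str
    source: str
    transformations: tuple[str, ...] = ()


@dataclass(frozen=True)
class DataBillOfMaterials:
    """Datasets, sources, and transformations behind one model version."""
    model_name: str
    model_version: str
    datasets: tuple[DatasetEntry, ...]

    def fingerprint(self) -> str:
        # Stable serialization so identical content always hashes the same.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Illustrative record; names and sources are made up.
dbom = DataBillOfMaterials(
    model_name="weld-defect-detector",
    model_version="1.3.0",
    datasets=(
        DatasetEntry("line3_images", "plant camera archive",
                     ("resize_512", "label_review")),
    ),
)
print(dbom.fingerprint())
```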

3. Model Risk Management

Much as financial models are subject to risk controls, AI models now require:

a) Validation before deployment

b) Periodic bias, drift, and performance testing

c) Versioning and rollback checkpoints

d) Segregation of development and production environments

Responsible AI governance structures typically include:

a) Model Review Boards

b) Ethics & Risk Committees

c) Independent validation functions

These mechanisms ensure models remain safe, fair, accurate, and explainable.
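Periodic drift testing can take many forms. As one minimal sketch, the code below compares a feature's training-time distribution with recent production values using the Population Stability Index (PSI), a widely used drift statistic. The equal-width binning, the small floor for empty bins, and the 0.2 alert threshold are assumptions chosen for illustration; a validation function would set its own.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a recent sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range fall into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected: Sequence[float], actual: Sequence[float],
                threshold: float = 0.2) -> bool:
    """True when PSI exceeds the (assumed) alert threshold."""
    return psi(expected, actual) > threshold


reference = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]  # shifted toward higher values
print(drift_alert(reference, reference), drift_alert(reference, recent))
```

A result like this would typically feed a Model Review Board decision rather than trigger an automatic rollback on its own.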

4. Security & Cyber-Resilience

AI systems expand the attack surface through threats such as:

a) Model poisoning

b) Prompt manipulation

c) Data exfiltration via inference

d) Shadow AI usage across teams

Controls now extend beyond IT infrastructure into:

a) Secure model lifecycle management

b) Access controls for training datasets

c) Monitoring of third-party AI services

d) Protection of proprietary algorithms

Within industrial settings, it is crucial to carefully manage how AI interacts with OT and plant-floor systems to avoid unforeseen effects on operations.

5. Human Oversight & Operating Governance

AI is meant to support operational decision-making rather than take its place.

Enterprises are increasingly formalizing:

a) "Human-in-the-loop" decision checkpoints

b) Role-based accountability frameworks

c) Operator training on AI interpretation and escalation

d) Exception-handling procedures for automation failures

AI governance becomes as much organizational and cultural as technical.
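A "human-in-the-loop" checkpoint can be as simple as a routing rule placed in front of any automated action. The sketch below is one hedged example: the `Recommendation` fields, the `route` function, and the 0.9 confidence threshold are hypothetical, and a real policy would be set by the accountable role, not hard-coded.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPLY = "auto_apply"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Recommendation:
    """A model output proposed for action (hypothetical fields)."""
    action: str
    confidence: float       # model-reported score in [0, 1]
    safety_critical: bool   # e.g. the action touches plant-floor equipment


def route(rec: Recommendation, min_confidence: float = 0.9) -> Route:
    """Send safety-critical or low-confidence recommendations to an operator."""
    if rec.safety_critical or rec.confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPLY


print(route(Recommendation("reorder_spare_part", 0.97, False)))
print(route(Recommendation("stop_line_3", 0.99, True)))
```

Note that the safety-critical flag overrides confidence entirely, which reflects the idea that some decisions always stay with a person regardless of how certain the model is.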

Practical Roadmap: How Enterprises Can Adapt Their Governance Frameworks

Organizations do not need to replace existing IT governance. Instead, they should extend and modernize it through a pragmatic, staged approach.

Step 1: Establish an AI Governance Charter

Define:

- Scope of AI use

- Ethical principles and risk posture

- Roles and decision authorities

- Accountability boundaries across IT, business, legal, and data teams

This prevents fragmented or shadow AI adoption.

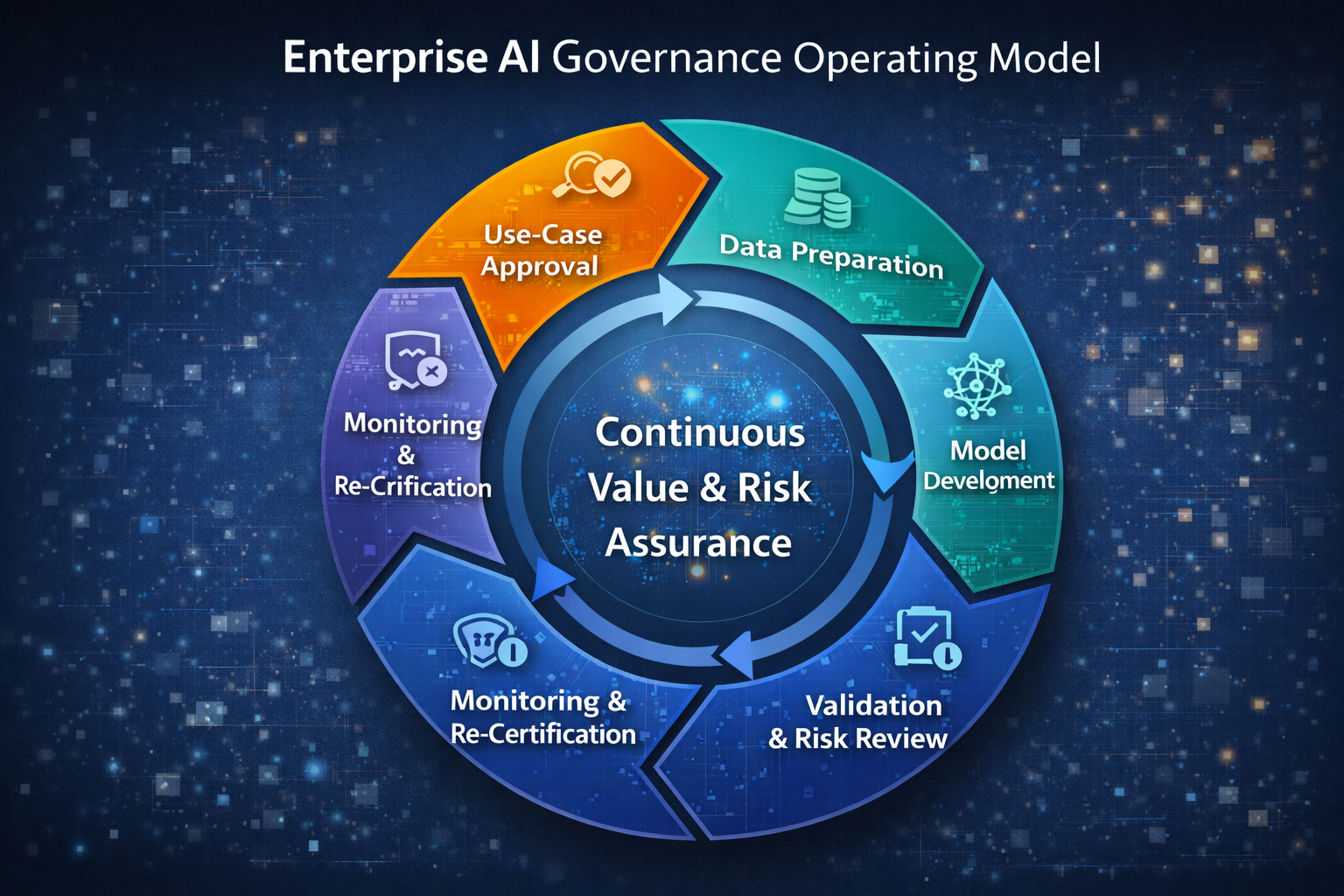

Step 2: Extend SDLC into ML Lifecycle Controls

Expand existing SDLC and change processes into ML lifecycle controls, covering:

- Use-case approval

- Model design and transparency documentation

- Validation and testing

- Controlled deployment gating

- Ongoing monitoring and review

Treat models as evolving digital assets, not as one-time deliverables.
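Controlled deployment gating can be expressed as a check that every earlier lifecycle stage has been signed off before a release proceeds. The sketch below assumes a hypothetical `ModelRelease` record and stage names that mirror the list above; an actual pipeline would read these sign-offs from its own change-management tooling.

```python
from dataclasses import dataclass, field

# Stage names are illustrative and mirror the lifecycle controls listed above.
REQUIRED_STAGES = (
    "use_case_approval",
    "design_documentation",
    "validation_and_testing",
    "deployment_signoff",
)


@dataclass
class ModelRelease:
    """A candidate model version and the stages signed off so far."""
    name: str
    version: str
    completed: set[str] = field(default_factory=set)


def missing_stages(release: ModelRelease) -> list[str]:
    """Required stages not yet signed off, in lifecycle order."""
    return [s for s in REQUIRED_STAGES if s not in release.completed]


def can_deploy(release: ModelRelease) -> bool:
    """The gate opens only when no required stage is missing."""
    return not missing_stages(release)


release = ModelRelease("scrap-predictor", "2.0.0",
                       {"use_case_approval", "design_documentation"})
print(can_deploy(release), missing_stages(release))
```

Because the gate is evaluated per version, a retrained model goes through the same checks as the original, which fits the idea of models as evolving assets.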

Step 3: Operationalize Data Controls for AI

Strengthen:

- Oversight of master data

- Quality assessment of training data

- Policies for accessing and anonymizing data

- Systems for tracking and tracing data

- Governance of data from vendors and third parties

In regulated or safety-critical environments, data provenance becomes audit-critical.

Step 4: Build Cross-Functional AI Risk Management

Integrate AI into:

- Enterprise risk registers

- Cyber and privacy assessments

- Business continuity planning

- Vendor governance frameworks

Shift from IT-only responsibility to multi-stakeholder governance.

Step 5: Institutionalize Continuous Monitoring

AI governance is not static.

Mature enterprises embed:

- KPI-linked performance dashboards

- Bias and drift alerts

- Periodic re-certification checkpoints

- Structured post-incident reviews

Governance becomes dynamic, adaptive, and insight-led.
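The monitoring elements above can be combined into a single periodic health check per model. The following sketch is illustrative only: the `ModelStatus` fields, the finding labels, the 0.2 drift limit, and the 180-day re-certification interval are assumptions, not recommended values.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ModelStatus:
    """Snapshot of one model's monitored signals (hypothetical fields)."""
    name: str
    kpi_value: float      # current value of the linked business KPI
    kpi_floor: float      # minimum acceptable KPI value
    drift_score: float    # output of whatever drift test is in use
    last_certified: date


def open_findings(status: ModelStatus, today: date,
                  drift_limit: float = 0.2,
                  recert_interval_days: int = 180) -> list[str]:
    """Return the governance findings that need follow-up for this model."""
    findings = []
    if status.kpi_value < status.kpi_floor:
        findings.append("kpi_below_floor")
    if status.drift_score > drift_limit:
        findings.append("drift_alert")
    if today - status.last_certified > timedelta(days=recert_interval_days):
        findings.append("recertification_due")
    return findings


status = ModelStatus("fill-rate-forecaster", kpi_value=0.93, kpi_floor=0.95,
                     drift_score=0.05, last_certified=date(2024, 1, 10))
print(open_findings(status, today=date(2024, 9, 1)))
```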

Governance as an Enabler, Not a Barrier

Many people mistakenly believe governance hinders innovation. In fact, effective AI governance helps organizations adopt new technologies responsibly by:

- Easing concerns about unforeseen risks

- Speeding up compliance reviews

- Streamlining the process for model approval

- Strengthening confidence among executives and regulators

- Providing reusable safeguards that allow quicker scaling

This approach is particularly valuable in industries like manufacturing, where it lets AI deliver operational improvements while maintaining safety, quality, and trust.

What Differentiates Successful AI Governance Programs

Organizations that successfully modernize governance tend to:

- Treat AI as a business capability, not just a technology

- Anchor governance in measurable value outcomes

- Maintain strong collaboration between IT, operations, legal, and risk

- Invest in data quality as a strategic asset

- Balance innovation freedom with structured oversight

Rather than asking "How do we control AI?", they ask:

"How do we scale AI confidently, responsibly, and profitably?"

That mindset represents the true evolution of technology governance in the AI era.

By IDENHIVE Team